Introduction

The use of IoT (Internet of Things) devices has grown recently in applications such as agriculture, smartwatches, smart buildings, retail shops, object tracking, and many more. These devices generate large amounts of data, which is then sent to the cloud for analysis.

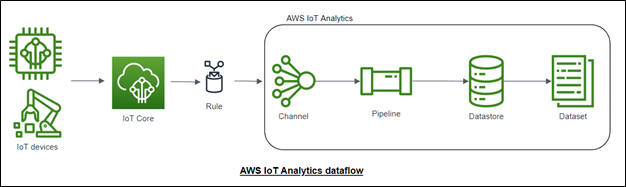

Because IoT data is generally unstructured and difficult to evaluate, it must be formatted before the analytics process can begin. AWS IoT Analytics lets you convert unstructured data to structured data and then analyze it.

This blog shows you how to create a dataset with AWS IoT Analytics. To create a dataset, we first need to create a channel, a data store, and a data pipeline.

Creation of Channel

- Log in to the AWS Console, type AWS IoT Analytics into the search box, and pick the IoT Analytics service. In the IoT Analytics service, choose Channels and click the Create Channel button in the upper right corner.

- Enter testing_channel as the Channel name and select Service managed storage as the Storage type, which means AWS IoT Analytics manages the storage on your behalf; the data is stored in S3 in the background. Then click Next.

- In the option for ingesting messages from IoT Core, which lets the channel receive messages published to particular topics, enter testing/# as the MQTT topic filter.

- Click Create New in the IAM Role option, enter testing_channel_role as the role name, and click Create Role. Then click Next and choose Create Channel; the channel will be created shortly.
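
The channel steps above can also be scripted. The following is a minimal sketch using boto3, assuming an IAM role already exists that allows IoT Core to send messages to the channel. The rule name testing_channel_rule, the helper function names, and the role ARN argument are illustrative assumptions and are not part of the console walkthrough. The request arguments are built as plain dictionaries, and AWS is only contacted inside create_channel_with_rule.

```python
def channel_request(name: str) -> dict:
    """Build the arguments for the IoT Analytics create_channel call."""
    return {
        "channelName": name,
        # Service managed storage: AWS IoT Analytics owns the S3 storage.
        "channelStorage": {"serviceManagedS3": {}},
    }


def topic_rule_request(rule_name: str, topic_filter: str,
                       channel_name: str, role_arn: str) -> dict:
    """Build the arguments for the IoT Core create_topic_rule call."""
    return {
        "ruleName": rule_name,
        "topicRulePayload": {
            # Forward every message matching the topic filter to the channel.
            "sql": f"SELECT * FROM '{topic_filter}'",
            "actions": [
                {"iotAnalytics": {"channelName": channel_name,
                                  "roleArn": role_arn}}
            ],
        },
    }


def create_channel_with_rule(role_arn: str) -> None:
    """Create testing_channel and route testing/# messages into it."""
    import boto3  # imported lazily so the builders above work without boto3

    boto3.client("iotanalytics").create_channel(
        **channel_request("testing_channel")
    )
    boto3.client("iot").create_topic_rule(
        **topic_rule_request(
            "testing_channel_rule",  # hypothetical rule name
            "testing/#",
            "testing_channel",
            role_arn,  # ARN of a role you have created beforehand
        )
    )
```

Calling create_channel_with_rule requires AWS credentials and a configured region; the two builder functions can be inspected without either.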


Creation of Data Store

- Choose Data Stores in the AWS IoT Analytics console, then click Create Data Store in the top right corner.

- Enter testing_datastore as the Datastore ID, then click Next.

- Select Service managed storage as the Storage type, click Next, and choose JSON as the data format.

- Click Next, leave Custom data partitioning at its default, then click Next.

- Finally, review the settings and click Create Data Store.
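
As a rough programmatic equivalent of the data store steps above, the sketch below builds a create_datastore request with service managed storage and JSON as the file format. The function names are illustrative assumptions; custom data partitioning is simply left out, which mirrors keeping the console default.

```python
def datastore_request(name: str) -> dict:
    """Build the arguments for the IoT Analytics create_datastore call."""
    return {
        "datastoreName": name,
        # Service managed storage, as selected in the console.
        "datastoreStorage": {"serviceManagedS3": {}},
        # Store the processed messages as JSON.
        "fileFormatConfiguration": {"jsonConfiguration": {}},
    }


def create_datastore(name: str = "testing_datastore") -> None:
    """Create the data store; needs AWS credentials and a region."""
    import boto3  # imported lazily so datastore_request works without boto3

    boto3.client("iotanalytics").create_datastore(**datastore_request(name))
```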

Creation of Data Pipeline

- In the AWS IoT Analytics console, select Pipelines, then click Create pipeline in the top right corner and name the pipeline testing_pipeline.

- Choose testing_channel for the pipeline source and testing_datastore for the pipeline output.

- In Infer message attributes, enter Unit as the Attribute name, deg C as the Value, and string as the data type. Then click Next.

- The Enrich, transform, and filter messages step lists the pipeline activities, which let us transform the data using Lambda, apply formulas, or add and remove data points. For now, leave it at the default and click Next. Finally, review the pipeline and click Create Pipeline. The pipeline will be created shortly.
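
The pipeline built above connects the channel directly to the data store, with no transformation activities in between. A minimal boto3 sketch of that shape follows; the activity names from_channel and to_datastore and the function names are illustrative assumptions. The Unit attribute entered in the attribute inference step is omitted here, on the assumption that it only assists the console and is not part of the pipeline definition.

```python
def pipeline_request(name: str, channel: str, datastore: str) -> dict:
    """Build the arguments for the IoT Analytics create_pipeline call."""
    return {
        "pipelineName": name,
        "pipelineActivities": [
            # Source activity: read messages from the channel.
            {"channel": {"name": "from_channel",
                         "channelName": channel,
                         "next": "to_datastore"}},
            # Sink activity: write the messages to the data store.
            {"datastore": {"name": "to_datastore",
                           "datastoreName": datastore}},
        ],
    }


def create_pipeline() -> None:
    """Create testing_pipeline; needs AWS credentials and a region."""
    import boto3  # imported lazily so pipeline_request works without boto3

    boto3.client("iotanalytics").create_pipeline(
        **pipeline_request("testing_pipeline",
                           "testing_channel",
                           "testing_datastore")
    )
```

Transformation activities such as a Lambda step would be added between the two entries, each pointing to the following activity through its next field.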

Creation of Dataset

- In the AWS IoT Analytics console, select Datasets, then click Create a dataset in the top right corner.

- On the next page, choose the SQL datasets option, then name the dataset testing_dataset.

- Choose testing_datastore for the Datastore source, then click Next. The AWS IoT Analytics dataset will query the data from the data store using SQL.

- Enter SELECT * FROM testing_datastore LIMIT 100 in the Author SQL query field and click Next. Leave the Data selection filter options unchanged and click Next.

- Leave the Set query schedule options unchanged and click Next. Leave the Configure the findings of your dataset selections unchanged and click Next.

- The dataset content delivery rules can be configured to store the dataset in S3; for the time being, leave that option unchanged and click Next.

- Finally, review the dataset and click the Create dataset button. The dataset will be available shortly.
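
The dataset defined above is essentially a named SQL query against the data store. The sketch below shows one way to express it with boto3; the action name and function names are illustrative assumptions, and the schedule, filter, and delivery settings are left out to match the console defaults.

```python
def dataset_request(name: str, datastore: str, limit: int = 100) -> dict:
    """Build the arguments for the IoT Analytics create_dataset call."""
    return {
        "datasetName": name,
        "actions": [
            {
                "actionName": f"{name}_query",  # hypothetical action name
                "queryAction": {
                    # Same query as entered in the Author SQL query field.
                    "sqlQuery": f"SELECT * FROM {datastore} LIMIT {limit}"
                },
            }
        ],
    }


def create_dataset() -> None:
    """Create testing_dataset; needs AWS credentials and a region."""
    import boto3  # imported lazily so dataset_request works without boto3

    boto3.client("iotanalytics").create_dataset(
        **dataset_request("testing_dataset", "testing_datastore")
    )
```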

Data Ingestion and Testing

- To ingest IoT data into AWS IoT Analytics, navigate to AWS IoT Core, open the MQTT test client, and choose the Publish to a topic option.

- Enter testing/121 as the topic name and {"temperature": 27, "humidity": 60} as the message payload, then click Publish. In the same way, publish further messages with different values: {"temperature": 28, "humidity": 65}, {"temperature": 26, "humidity": 63}, {"temperature": 22, "humidity": 62}, {"temperature": 21, "humidity": 61}, {"temperature": 25, "humidity": 65}.

- Then navigate to testing_dataset in IoT Analytics, click the Run now option in the top right corner, and open the Content option.

- Finally, click the name of the generated dataset content to view the result.
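
The publish and run steps above can be sketched in boto3 as well. This is a hedged example: the function names are illustrative assumptions, the readings are the six sample values from this walkthrough, and the iot-data client is assumed to resolve the account's default endpoint for the configured region.

```python
import json

# The six sample readings used above: (temperature, humidity).
READINGS = [(27, 60), (28, 65), (26, 63), (22, 62), (21, 61), (25, 65)]


def build_payloads(readings) -> list:
    """Turn (temperature, humidity) pairs into JSON message payloads."""
    return [json.dumps({"temperature": t, "humidity": h})
            for t, h in readings]


def publish_and_run(topic: str = "testing/121",
                    dataset: str = "testing_dataset"):
    """Publish the sample messages, then trigger and look up the dataset."""
    import boto3  # imported lazily so build_payloads works without boto3

    iot_data = boto3.client("iot-data")
    for payload in build_payloads(READINGS):
        iot_data.publish(topic=topic, qos=1, payload=payload.encode("utf-8"))

    analytics = boto3.client("iotanalytics")
    # Equivalent of clicking Run now in the console.
    analytics.create_dataset_content(datasetName=dataset)
    content = analytics.get_dataset_content(datasetName=dataset,
                                            versionId="$LATEST")
    # The state is typically CREATING right after the run is triggered.
    uris = [entry["dataURI"] for entry in content.get("entries", [])]
    return content["status"]["state"], uris
```

Because messages take a moment to pass through the pipeline and the query runs asynchronously, the content lookup may need to be repeated until the state reports SUCCEEDED.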

Conclusion

Thus, we have seen how to create a channel, data store, pipeline, and dataset in AWS IoT Analytics. Using AWS IoT Analytics for IoT applications saves time, because the data can be structured and analyzed in the same service and then visualized using Amazon QuickSight.


About CloudThat

CloudThat is an official AWS (Amazon Web Services) Advanced Consulting Partner and Training Partner and a Microsoft Gold Partner, helping people develop knowledge of the cloud and helping their businesses aim for higher goals using best-in-industry cloud computing practices and expertise. We are on a mission to build a robust cloud computing ecosystem by disseminating knowledge on technological intricacies within the cloud space. Our blogs, webinars, case studies, and white papers enable all the stakeholders in the cloud computing sphere.

Drop a query if you have any questions regarding IoT Analytics and I will get back to you quickly.

To get started, go through our Consultancy page and Managed Services Package, which describe CloudThat's offerings.

FAQs

1. What are the steps involved in AWS IoT Analytics?

ANS: – Creation of the channel, creation of the data store, creation of the pipeline, and creation of the dataset.

2. What are the applications of AWS IoT Analytics?

ANS: – Smart agriculture, predictive maintenance, proactive supply replenishment, and process efficiency evaluation.

WRITTEN BY Vasanth Kumar R

Vasanth Kumar R works as a Sr. Research Associate at CloudThat. He is highly focused and passionate about learning new cutting-edge technologies, including Cloud Computing, AI/ML, and IoT/IIoT. He has experience with AWS and Azure Cloud Services, Embedded Software, and IoT/IIoT Development, and has also worked with various sensors and actuators as well as electrical panels for Greenhouse Automation.

November 15, 2022
